Experiments that actually ship. Every month.

Adi designs, builds, and validates every experiment himself — no developer handoff, no implementation lag, no recommendations that sit in a document. Scope is customised per client at the start of each engagement.

Exact scope and deliverables are agreed in writing before the engagement begins — no ambiguity mid-month. The items below represent a typical retainer. Actual scope depends on your store's current state and priorities.

Experiment Hypothesis & Variant Design

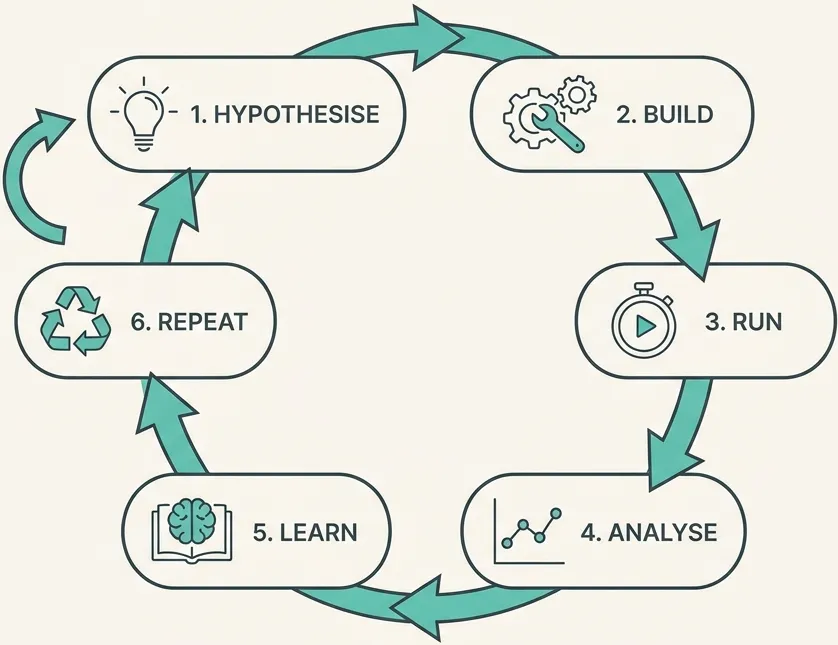

2 to 4 experiments per month, each starting with a data-grounded hypothesis — not intuition, not competitor copying. Variant design is informed by session recordings, heatmaps, and quantitative drop-off data.

Full Implementation

Adi builds every variant via A/B testing tools or custom JavaScript. No developer queue, no sprint planning, no waiting. Once a hypothesis is ready to test, the variant gets built and launched.

Server-Side Tracking

Server-side event tracking configured and maintained throughout the engagement — accurate data that isn't degraded by ad blockers or browser restrictions.

GA4 Tracking & Experiment Validation

Experiments are tracked correctly or they don't run. Every test includes event validation, goal configuration, and sanity checks before traffic is sent to variants.
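As a rough illustration of a pre-launch sanity check, the sketch below validates a list of experiment events before any traffic is assigned. The `validate_events` helper and the required `experiment_id` and `variant` parameters are hypothetical, chosen for this example only. The naming rule (letters, digits, and underscores, starting with a letter, at most 40 characters) follows GA4's documented event-name limits.

```python
import re

# GA4 event names: start with a letter, then letters, digits, or underscores; 40 chars max.
EVENT_NAME_RE = re.compile(r"[A-Za-z][A-Za-z0-9_]{0,39}")

# Hypothetical parameters this sketch expects on every experiment event.
REQUIRED_PARAMS = ("experiment_id", "variant")


def validate_events(events):
    """Return a list of readable problems; an empty list means every event passed."""
    problems = []
    for i, event in enumerate(events):
        name = event.get("name", "")
        if not EVENT_NAME_RE.fullmatch(name):
            problems.append(f"event {i}: invalid name {name!r}")
        params = event.get("params", {})
        for key in REQUIRED_PARAMS:
            if not params.get(key):
                problems.append(f"event {i}: missing param {key!r}")
    return problems
```

A check like this would run against the events a variant is expected to fire. If the returned list is not empty, the test does not launch.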

Post-test Analysis & Learnings

Statistical significance checks, segment analysis, documented learnings. Every test result — win, loss, or inconclusive — informs the next hypothesis in the roadmap.
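One common form of significance check is a two-sided, two-proportion z-test on conversion counts. The sketch below is a minimal example of that technique and not necessarily the exact method used in every engagement. The function name and inputs are illustrative.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare conversion rates of control (a) and variant (b).

    Returns (z, p_value) for a two-sided test using the pooled standard error.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0% or all 100%")
    z = (p_b - p_a) / se
    # Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

A p-value below the threshold agreed before launch (0.05 is a common choice) counts as significant. Anything else is logged as inconclusive rather than treated as a win or a loss.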

Analytics Maintenance & Fixes

Tracking breaks over time — new pages, Shopify updates, GTM drift. Ongoing maintenance is included. The data stays clean throughout the engagement.

Qualitative Insight Reviews

Regular review of heatmaps, session recordings, and form analytics. Qualitative signals often surface the next experiment before the quantitative data catches up.

Strategy Calls & Async Slack Support

Regular calls to align on priorities, review results, and plan the next sprint. Async Slack for questions, context, and quick decisions between calls.

Number of active experiments per month

More concurrent tests require more design, build, and analysis time. Scope is capped per engagement to maintain quality and statistical validity.
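To see why concurrency affects statistical validity, consider a standard sample-size approximation for comparing two conversion rates. The sketch below is illustrative: the function names, the default 5% significance level and 80% power, and the assumption that concurrent tests split traffic evenly and exclusively are choices made for this example, not fixed terms of the retainer.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant to detect a relative lift (two-sided)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_needed(n_per_variant, variants, weekly_visitors, concurrent_tests=1):
    """Weeks to reach the sample size, assuming traffic is split evenly across tests."""
    total_needed = n_per_variant * variants * concurrent_tests
    return math.ceil(total_needed / weekly_visitors)
```

Under these assumptions, every extra concurrent test lengthens the time each test needs to reach its sample size. That is the trade-off behind capping the number of active experiments.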

Implementation complexity per variant

A copy-only test on a text element is a different scope from a restructured PDP with custom dynamic logic. Complexity affects both build time and tracking requirements.

Analytics infrastructure condition at start

If tracking is clean and experiments are set up correctly, full velocity is immediate. If the setup needs significant remediation, that work reduces the bandwidth available for experiments early in the engagement.

Breadth of funnel covered

PDP-only optimisation is a narrower scope than full-funnel coverage (PDP, cart, checkout, post-purchase, email click-through). Full-funnel coverage compounds faster but costs more each month.

No mid-month surprises

Scope and deliverables are agreed in writing before the engagement begins. If something comes up that's outside scope, it's discussed — not silently billed.

After the 3-month minimum, the engagement continues month-to-month. You can exit with one month's notice. No lock-in beyond the initial commitment.

Tracking audit & baseline

If no prior audit exists, the first priority is verifying the analytics foundation. Clean data before any experiment runs.

Qualitative & quantitative analysis

Session recordings, heatmaps, funnel drop-off, behavioural segmentation. Evidence for the first set of hypotheses.

First experiments live

The first 1–2 experiments are designed, built, and launched. Tracking validated before traffic is assigned to variants.

Analysis & next sprint

Initial results are reviewed, learnings are documented, and the next hypotheses are framed in the roadmap. The cadence is established for the ongoing engagement.

The most direct path to experiment velocity

Start with the audit. The roadmap becomes the retainer.

The audit fee is credited in full toward the first month of the retainer. No wasted spend.